Executive Summary

- Who this is for: CIOs, CTOs, Enterprise Architects, AI Transformation Leaders

- Problem it solves: AI initiatives running as disconnected pilots without governance or structural clarity

- Key outcome: A practical framework to institutionalize AI across strategy, architecture, autonomy, and cost control

- Time to implement: 60–90 days

- Business impact: Reduced AI chaos, controlled autonomy, predictable cost growth, improved executive confidence

The Problem: AI Without an Operating Model

Most organizations are experimenting with AI.

Different teams:

- Use different models

- Build separate agents

- Integrate tools independently

- Run pilots without cross-review

Six months later:

- Costs fluctuate unpredictably

- Security escalations slow progress

- Compliance reviews increase friction

- Executives hesitate to scale

The issue is not capability.

The issue is structural absence.

AI is being treated like a tool.

It must be governed like a platform.

The Shift: From Experimentation to Institutional Capability

Pilots prove possibility.

Operating models enable scale.

Without an operating model:

- Autonomy increases without oversight

- Tool access expands without control

- Cost scales without accountability

- Strategy drifts from execution

Enterprise AI requires intentional structure.

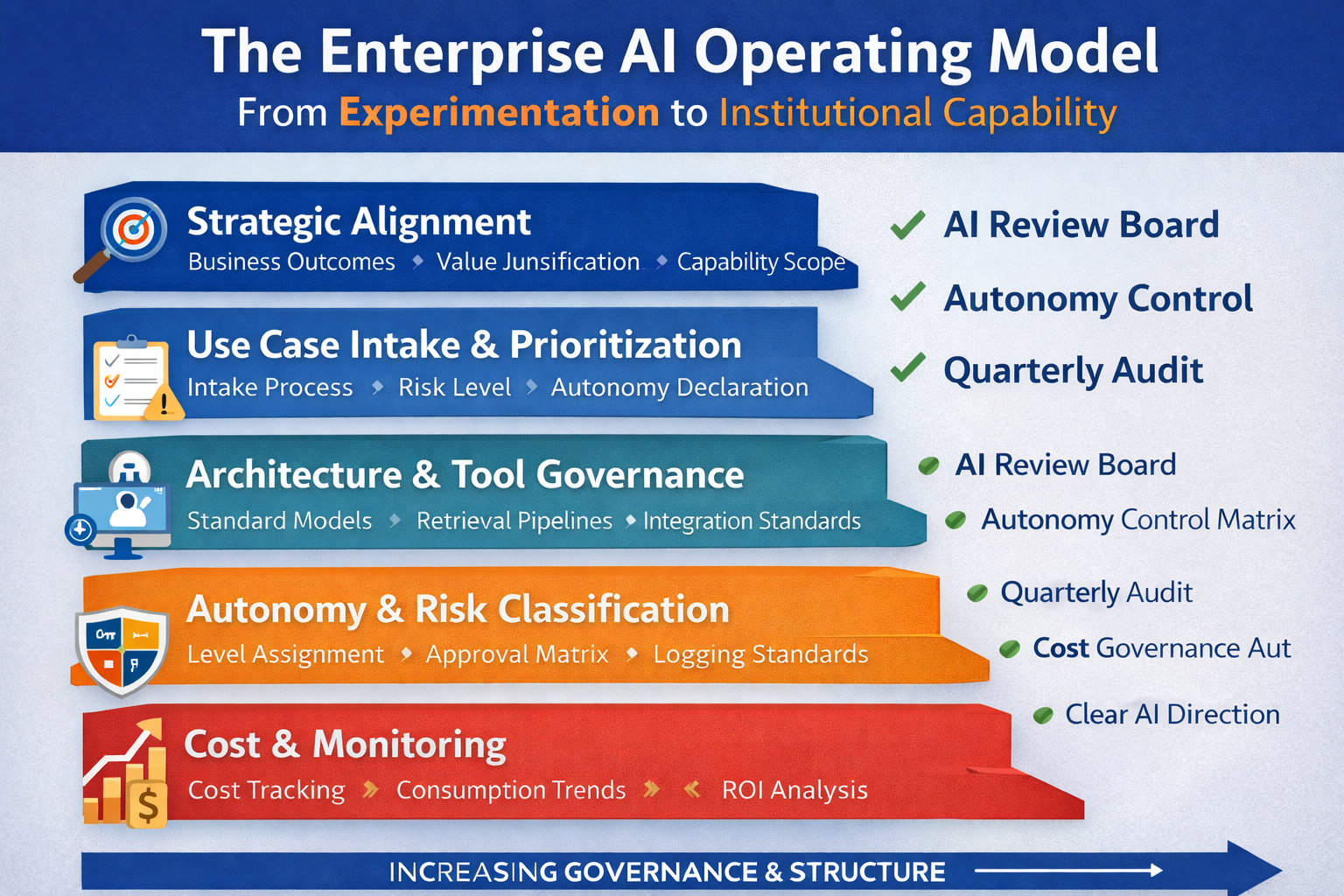

The Five Layers of an Enterprise AI Operating Model

1. Strategic Alignment

Questions to answer:

- What business outcomes is AI expected to impact?

- Is AI cost justified by measurable value?

- Which capabilities are assistive vs autonomous?

AI initiatives must map to enterprise objectives.

Not curiosity.

Not hype.

2. Use Case Intake & Prioritization

Introduce a formal intake process:

- Business sponsor defined

- Risk classification assigned

- Autonomy level declared

- Data sensitivity identified

- ROI hypothesis documented

If a use case cannot pass intake review, it should not move to development.

Discipline precedes innovation.
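As an illustration only, the intake checklist above could be captured as a small record with a completeness check. This is a minimal sketch, not a prescribed implementation: the class name, field names, and example values are assumptions made for the example.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class IntakeRequest:
    """One AI use case submitted for intake review (field names are illustrative)."""
    name: str
    business_sponsor: Optional[str] = None
    risk_classification: Optional[str] = None  # e.g. "low" / "medium" / "high" (assumed scale)
    autonomy_level: Optional[str] = None       # e.g. "generative" / "agent" / "agentic"
    data_sensitivity: Optional[str] = None     # e.g. "public" / "internal" / "restricted"
    roi_hypothesis: Optional[str] = None


def missing_intake_fields(request: IntakeRequest) -> list[str]:
    """Return the names of intake fields that are unset or blank."""
    return [
        f.name
        for f in fields(request)
        if not (getattr(request, f.name) or "").strip()
    ]


def passes_intake(request: IntakeRequest) -> bool:
    """A use case moves to development only when every intake field is filled in."""
    return not missing_intake_fields(request)


draft = IntakeRequest(name="Invoice triage assistant", business_sponsor="Finance Ops")
print(missing_intake_fields(draft))
print(passes_intake(draft))
```

A check like this only confirms that the intake form is complete. Whether the risk rating or ROI hypothesis is credible remains a judgment for the reviewers.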

3. Architecture & Tool Governance

AI systems must follow architectural standards:

- Approved LLM providers

- Standardized retrieval pipelines

- Controlled tool exposure

- Defined integration patterns

- Logging requirements

AI must integrate into your architecture review process.

Not bypass it.
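One way to make these standards checkable during architecture review is a simple allowlist comparison. The sketch below is hypothetical: the provider names, tool names, and the `AISystemSpec` structure are placeholders, and a real review would also cover retrieval pipelines and integration patterns.

```python
from dataclasses import dataclass, field

# Placeholder allowlists; real values would come from the architecture review process.
APPROVED_PROVIDERS = {"provider-a", "provider-b"}
APPROVED_TOOLS = {"search_kb", "create_ticket"}


@dataclass
class AISystemSpec:
    """Minimal description of an AI system submitted for review (illustrative)."""
    name: str
    llm_provider: str
    tools: set[str] = field(default_factory=set)
    logging_enabled: bool = False


def standards_violations(spec: AISystemSpec) -> list[str]:
    """List every way the spec departs from the assumed standards."""
    violations = []
    if spec.llm_provider not in APPROVED_PROVIDERS:
        violations.append(f"unapproved LLM provider: {spec.llm_provider}")
    for tool in sorted(spec.tools - APPROVED_TOOLS):
        violations.append(f"unapproved tool: {tool}")
    if not spec.logging_enabled:
        violations.append("logging not enabled")
    return violations


spec = AISystemSpec(
    name="Support copilot",
    llm_provider="provider-c",
    tools={"search_kb", "delete_account"},
)
for violation in standards_violations(spec):
    print(violation)
```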

4. Autonomy & Risk Classification

Every AI capability must be classified as:

- Creation (Generative)

- Execution (Agent)

- Delegated Decision Authority (Agentic)

Each category requires:

- Defined boundaries

- Escalation rules

- Approval checkpoints

- Audit logging

Autonomy should never be implicit.

It must be explicitly approved.
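The classification and its required controls can be expressed as a deployment gate. The following is a sketch under stated assumptions: the enum members mirror the three categories above, while the control identifiers and the `may_deploy` function are invented for illustration.

```python
from enum import Enum
from typing import Iterable, Optional


class Autonomy(Enum):
    GENERATIVE = "creation"
    AGENT = "execution"
    AGENTIC = "delegated decision authority"


# The four controls every category requires (identifier names are assumptions).
REQUIRED_CONTROLS = frozenset(
    {"boundaries", "escalation_rules", "approval_checkpoints", "audit_logging"}
)


def may_deploy(
    autonomy: Optional[Autonomy],
    controls: Iterable[str],
    approved_by: Optional[str],
) -> bool:
    """Allow deployment only with a declared class, all controls, and a named approver."""
    if not isinstance(autonomy, Autonomy):
        return False  # unclassified capability: autonomy is never implicit
    if not REQUIRED_CONTROLS <= set(controls):
        return False  # at least one required control is missing
    return bool(approved_by)  # approval must be explicit


print(may_deploy(Autonomy.AGENT, REQUIRED_CONTROLS, approved_by="AI Review Board"))
print(may_deploy(Autonomy.AGENTIC, {"boundaries", "audit_logging"}, approved_by="AI Review Board"))
print(may_deploy(None, REQUIRED_CONTROLS, approved_by="AI Review Board"))
```

The gate treats all three categories the same way. An organization could reasonably require stricter checkpoints for the agentic category, which this sketch does not model.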

5. Cost & Performance Monitoring

AI introduces variable cost dynamics.

You must monitor:

- Cost per workflow

- Token consumption trends

- Tool invocation frequency

- ROI per use case

- Failure rate and rework

AI without financial visibility becomes an operational liability.

Cost governance is not optional.
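To make these metrics concrete, here is a minimal sketch of how cost per workflow, token consumption, tool invocations, and failure rate might be derived from run logs. The unit prices are hypothetical placeholders, and the `WorkflowRun` record is an assumed log shape rather than any vendor's format. ROI per use case is left out because it depends on how the business measures value.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical unit prices in USD; actual rates depend on provider and contract.
PRICE_PER_1K_INPUT_TOKENS = 0.002
PRICE_PER_1K_OUTPUT_TOKENS = 0.006
PRICE_PER_TOOL_CALL = 0.001


@dataclass
class WorkflowRun:
    """One logged execution of an AI workflow (assumed log shape)."""
    workflow: str
    input_tokens: int
    output_tokens: int
    tool_calls: int
    succeeded: bool


def run_cost(run: WorkflowRun) -> float:
    """Estimated cost of a single run under the placeholder prices."""
    return (
        run.input_tokens / 1000 * PRICE_PER_1K_INPUT_TOKENS
        + run.output_tokens / 1000 * PRICE_PER_1K_OUTPUT_TOKENS
        + run.tool_calls * PRICE_PER_TOOL_CALL
    )


def summarize(runs: list[WorkflowRun]) -> dict[str, dict[str, float]]:
    """Aggregate per-workflow cost, token, tool-call, and failure metrics."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[run.workflow].append(run)

    summary = {}
    for workflow, items in grouped.items():
        total_cost = sum(run_cost(r) for r in items)
        summary[workflow] = {
            "runs": len(items),
            "cost_per_run": total_cost / len(items),
            "tokens": sum(r.input_tokens + r.output_tokens for r in items),
            "tool_calls": sum(r.tool_calls for r in items),
            "failure_rate": sum(not r.succeeded for r in items) / len(items),
        }
    return summary


runs = [
    WorkflowRun("invoice-triage", 1000, 500, 2, True),
    WorkflowRun("invoice-triage", 3000, 1000, 0, False),
]
for workflow, metrics in summarize(runs).items():
    print(workflow, metrics)
```

Tracking these figures per workflow rather than per team or per model keeps the numbers tied to the use case that must justify them.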

Governance Structure Required

To institutionalize AI, establish:

1. AI Review Board

- Architecture representation

- Security & compliance

- Business sponsor

- Finance oversight

2. Autonomy Approval Matrix

Define:

- What AI may generate

- What AI may execute

- What AI may decide

3. Quarterly AI Structural Audit

- Tool access review

- Cost variance analysis

- Risk reclassification

- Sunset decisions for underperforming use cases

AI should be reviewed like cloud architecture or cybersecurity posture.

Implementation Roadmap (90 Days)

Phase 1: Inventory (Weeks 1–3)

- List all AI use cases

- Classify autonomy level

- Identify model providers

- Map integration points

Success Metric: Complete AI capability map

Phase 2: Structural Definition (Weeks 4–8)

- Formalize intake process

- Define autonomy approval rules

- Introduce logging standards

- Standardize architecture patterns

Success Metric: No AI initiative proceeds without a governance pathway

Phase 3: Institutionalization (Weeks 9–12)

- Establish AI Review Board

- Introduce cost dashboards

- Integrate AI into enterprise architecture governance

- Conduct first structural audit

Success Metric: AI governed as an enterprise capability

Evidence from Practice

Organizations that lack an AI operating model tend to experience:

- Duplicate experimentation

- Inconsistent compliance responses

- Executive resistance to scaling

- Unpredictable cost growth

Organizations that institutionalize AI governance tend to experience:

- Faster approvals

- Improved budget clarity

- Stronger executive trust

- Controlled autonomy expansion

Clarity increases confidence.

Confidence enables scale.

Action Plan

This Week

Ask three questions:

- Who owns AI governance in your organization?

- How many autonomous systems are currently active?

- Can you calculate cost per AI workflow?

If the answers are unclear, you do not yet have an operating model.

Next 30 Days

Establish:

- AI intake process

- Autonomy classification framework

- Architecture review alignment

3–6 Months

Integrate AI into:

- Enterprise architecture governance

- Risk review processes

- Budget planning cycles

- Executive reporting dashboards

Scale AI only after you structure it.

Final Thought

Architecture defines structure.

Autonomy defines authority.

The Operating Model defines control.

Enterprise AI success does not come from better models.

It comes from better governance.

Next Step

If your organization is moving beyond AI experimentation and needs structural clarity:

→ Book a 30-minute strategy consultation

AI transformation succeeds when experimentation becomes institutional capability.